Exploring results

Understand and compare your profiling and benchmarking results.

When you profile a model through Embedl Hub, the compiled model is deployed to a real device and measured for latency, memory usage, and per-layer performance. This guide explains how profiling works, how to read the results, and how to organize your runs for effective comparison.

How profiling works

When you profile a model using a cloud provider (such as qai-hub or aws), the following happens:

- The compiled model is uploaded to the cloud.

- A target device is provisioned (the job is queued if all devices are busy).

- The model is transferred to the target device.

- The model is profiled using the target runtime, measuring various performance metrics.

- Results are made available on the run details page.

When you use a provider that runs on your own hardware (such as embedl-onnxruntime or trtexec), the model is transferred to your device over SSH and profiled directly, with no queuing or cloud provisioning involved.

Reading the results

After a profiling run completes, open the run details page from the link printed in the CLI output or from your project’s page on the Hub.

Summary metrics

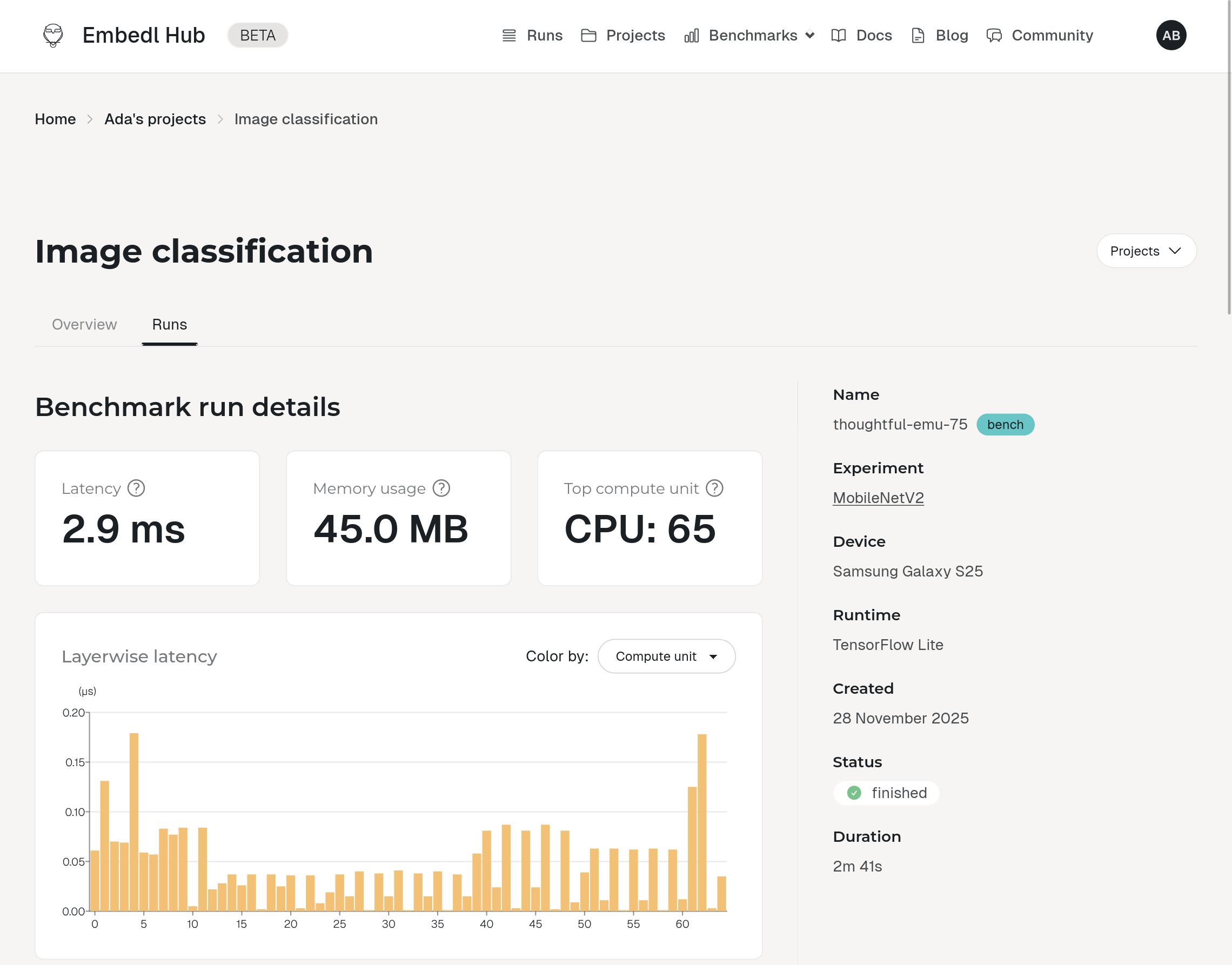

The top of the page shows three headline numbers:

- Latency — the average time for a single inference on the target device, in milliseconds (ms).

- Memory usage — peak memory consumption during inference, in megabytes (MB).

- Top compute unit — the compute unit (CPU, GPU, or NPU) that executed the most layers.
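To make the three definitions concrete, here is a minimal sketch that derives them from raw measurements. The input lists, their values, and the `summarize` helper are hypothetical and exist only for this example; they do not reflect the Hub's actual data format or how it computes the numbers internally.

```python
from collections import Counter
from statistics import mean

# Hypothetical measurements; names and values are illustrative only.
inference_latencies_ms = [4.1, 3.9, 4.0, 4.4]  # one entry per timed inference
memory_samples_mb = [51.2, 63.8, 60.1]  # memory sampled during inference
layer_compute_units = ["NPU", "NPU", "CPU", "NPU", "GPU"]  # one entry per layer


def summarize(latencies_ms, memory_mb, compute_units):
    """Return (average latency, peak memory, compute unit with the most layers)."""
    top_unit, _layer_count = Counter(compute_units).most_common(1)[0]
    return mean(latencies_ms), max(memory_mb), top_unit


latency_ms, peak_mb, top_unit = summarize(
    inference_latencies_ms, memory_samples_mb, layer_compute_units
)
print(f"Latency: {latency_ms:.2f} ms")
print(f"Memory usage: {peak_mb:.1f} MB")
print(f"Top compute unit: {top_unit}")
```

Note that "top compute unit" counts layers, not time: a unit can execute the most layers and still account for a small share of the total latency.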

Layerwise latency

The layerwise latency chart breaks down latency by individual layer in topological order, showing where time is spent across the model.

Hover over a bar to see the layer name and latency. Click a bar to highlight the corresponding row in the model summary table below.

Operation type breakdown

The operation type pie chart has two modes:

- Latency — total latency contributed by each operation type.

- Layer count — number of layers of each type.
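The two modes are two aggregations over the same per-layer data. The sketch below shows the idea with a hypothetical list of layer records; the tuple layout, operation names, and latencies are assumptions for illustration, not values exported by the Hub.

```python
from collections import defaultdict

# Hypothetical per-layer records: (layer name, operation type, latency in ms).
layers = [
    ("conv1", "Conv2D", 1.20),
    ("relu1", "ReLU", 0.05),
    ("conv2", "Conv2D", 0.90),
    ("pool1", "MaxPool", 0.10),
    ("fc1", "FullyConnected", 0.25),
]


def breakdown(records):
    """Aggregate total latency and layer count per operation type."""
    latency_by_type = defaultdict(float)
    count_by_type = defaultdict(int)
    for _name, op_type, latency_ms in records:
        latency_by_type[op_type] += latency_ms
        count_by_type[op_type] += 1
    return dict(latency_by_type), dict(count_by_type)


latency_by_type, count_by_type = breakdown(layers)
total_ms = sum(latency_by_type.values())

# Print operation types from most to least expensive.
for op_type, latency_ms in sorted(latency_by_type.items(), key=lambda kv: -kv[1]):
    share = 100 * latency_ms / total_ms
    print(f"{op_type:15s} {latency_ms:5.2f} ms ({share:4.1f}%)  layers: {count_by_type[op_type]}")
```

Comparing the two views is often informative: an operation type with few layers but a large latency share is a natural first target for optimization.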

Compute units

This section breaks down the number of layers executed on each compute unit (CPU, GPU, NPU). It helps you understand how effectively the model uses the available hardware: for example, whether compute-heavy layers are running on the NPU or falling back to the CPU.
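One way to act on this breakdown is to look for expensive layers that ended up on the CPU. The sketch below assumes a hypothetical list of per-layer records and an arbitrary 0.5 ms threshold; neither comes from the Hub, and you would substitute values read from your own run.

```python
from collections import Counter

# Hypothetical per-layer records: (layer name, compute unit, latency in ms).
layers = [
    ("conv1", "NPU", 0.40),
    ("conv2", "NPU", 0.35),
    ("resize", "CPU", 1.10),
    ("softmax", "CPU", 0.08),
    ("conv3", "NPU", 0.30),
]

# Number of layers executed on each compute unit.
layers_per_unit = Counter(unit for _name, unit, _ms in layers)


def cpu_fallbacks(records, min_latency_ms=0.5):
    """Return (name, latency) for CPU layers at or above the threshold, slowest first."""
    hits = [
        (name, ms)
        for name, unit, ms in records
        if unit == "CPU" and ms >= min_latency_ms
    ]
    return sorted(hits, key=lambda item: item[1], reverse=True)


print(dict(layers_per_unit))
print(cpu_fallbacks(layers))
```

In this made-up data the NPU runs most layers, yet a single CPU layer costs more than any NPU layer, which is the kind of fallback the breakdown is meant to surface.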

Viewing your runs

Every compile, profile, and invoke operation is recorded as a run. Each run is stored locally in the artifact directory and automatically synced to your Hub page, where you can inspect results, compare runs side-by-side, and share them with your team.

On the Hub page

Open your project on hub.embedl.com to see a timeline of all runs. Each run shows the component type (compiler, profiler, invoker), status, device, metrics such as latency and memory usage, and the artifacts that were produced. You can click into any run to see layer-by-layer breakdowns and download compiled models.

From the CLI

Use embedl-hub log to list recent runs directly in your terminal.

The command reads runs from the artifact directory configured with embedl-hub init --artifact-dir. It only shows runs stored in that directory, so if you used a different artifact directory for some runs, or created runs via the Python API with a custom path, they won’t appear unless you point --artifact-dir to the same location.

Each entry shows the run ID, model name, component type, timestamp, status, and a direct link to the run on your Hub page.

Run embedl-hub log --help for the full list of options.

Naming and tagging runs

Run names

Every run gets a name that appears in the Hub. By default the name is the component class name (e.g. TFLiteCompiler). To make runs easier to identify, you can override it by passing --run-name (or -rn) to any command.

Tags

You can attach tags to runs as key-value pairs. Tags are useful for organizing runs by model variant, dataset, experiment, or any other dimension you care about.

Pass one or more --tag flags to any compile or profile command.

Benchmark view

On the Hub page, tags appear on each run’s detail page and power the Benchmarks view. The benchmarks page collects all runs in a project and plots them in a scatter chart where you can color-code data points by any tag: for example, coloring by model to compare latency across model variants, or by dataset to see how calibration data affects accuracy. You can also filter runs by tag values to focus on specific experiments.

This makes it easy to answer questions like:

- Which model variant is fastest on a given device?

- How does INT8 quantization compare to FP16 across different devices?

- Does my calibration dataset improve accuracy without hurting latency?
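Conceptually, filtering and coloring by tag amount to selecting runs by tag value and grouping them by a tag key. The sketch below reproduces that logic on a hypothetical list of run records; the record layout, tag keys (`model`, `precision`), and latency values are invented for illustration and are not the Hub's data model.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical run records; tag keys and values are examples only.
runs = [
    {"name": "r1", "latency_ms": 4.2, "tags": {"model": "mobilenet", "precision": "int8"}},
    {"name": "r2", "latency_ms": 6.8, "tags": {"model": "mobilenet", "precision": "fp16"}},
    {"name": "r3", "latency_ms": 9.5, "tags": {"model": "resnet", "precision": "int8"}},
    {"name": "r4", "latency_ms": 4.6, "tags": {"model": "mobilenet", "precision": "int8"}},
]


def filter_runs(records, **wanted):
    """Keep runs whose tags match every key/value pair in `wanted`."""
    return [
        run
        for run in records
        if all(run["tags"].get(key) == value for key, value in wanted.items())
    ]


def mean_latency_by_tag(records, key):
    """Group runs by the value of one tag and average their latency."""
    groups = defaultdict(list)
    for run in records:
        if key in run["tags"]:
            groups[run["tags"][key]].append(run["latency_ms"])
    return {value: mean(latencies) for value, latencies in groups.items()}


# Filter to one model variant, then compare precisions within it.
mobilenet_runs = filter_runs(runs, model="mobilenet")
by_precision = mean_latency_by_tag(mobilenet_runs, "precision")
print(by_precision)
```

The sketch also shows why consistent tagging matters: a run that lacks the `precision` tag would silently drop out of the comparison.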